MIT 6.824: Lecture 11 - Cache Consistency, Frangipani

The ideal distributed file system would guarantee that all its users have coherent access to a shared set of files and be easily scalable. It would also be fault-tolerant and require minimal human administration.

Frangipani is a distributed file system that approximates this ideal. It provides a consistent view of shared files while maintaining a cache for each user, scales up by adding new Frangipani servers, recovers automatically from server failures, and is easy to administer.

This post focuses on how Frangipani maintains cache coherence through the interaction between its two-layer structure and a distributed lock service.

Table of Contents

- Frangipani

- Conclusion

- Further Reading

Frangipani #

Overview #

Frangipani is built on top of a distributed storage service named Petal, which provides virtual disks to its clients. Petal’s virtual disks resemble physical disks in that data is read and written in blocks. Petal can also replicate data for high availability. Much of Frangipani’s fault tolerance, scalability, and ease of administration is inherited from Petal.

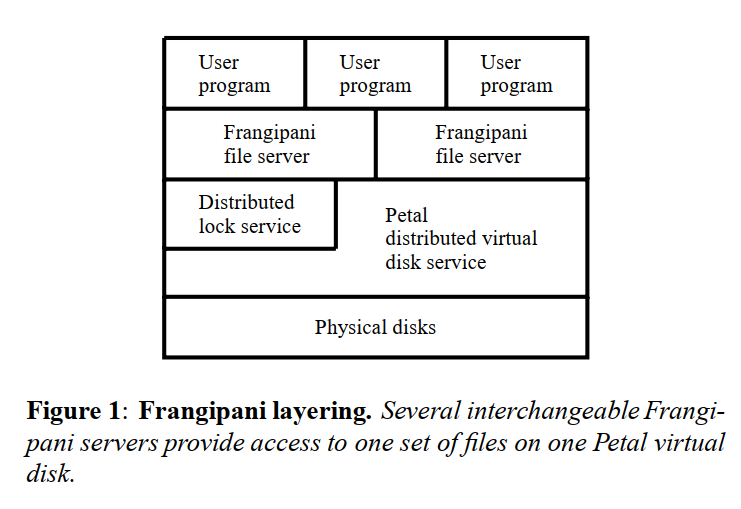

A typical setup consists of multiple Frangipani servers running on top of a shared Petal virtual disk, as shown in Figure 1.

Frangipani maintains a write-back cache #

Frangipani was built for a use case where all the servers are under a common administration, for example a research lab with collaborating users. In such an environment, each user’s workstation runs a Frangipani file server module beneath the user programs.

In this scenario, most operations involve a user accessing their own files. Frangipani makes these operations fast by maintaining a write-back cache on each workstation. However, a user may occasionally want to access files written by another user, or access their own files from another workstation. The goal in these cases is correctness: every read of a file from one workstation should see the latest write to that file, even if that write is still sitting in another workstation’s cache. Herein lies the challenge of cache coherence: how can we keep the data across multiple caches consistent?

Next up, we’ll discuss Frangipani’s approach to solving this problem.

Synchronization and Cache Coherence #

Frangipani uses multiple-reader/single-writer locks #

When a Frangipani server wants to read a file or directory, it requests a read lock on the object. Once the lock is granted, the server can load the relevant data from disk into its cache.

Similarly, a server updates a file or directory by requesting a write lock, after which it can read or write the associated data on disk and cache it.

Multiple servers can hold read locks on an object at the same time, but all of those locks must be released before a write lock request can be granted. Each Frangipani server also holds a lease from the lock service, which it must keep renewing before the lease expires. If the lease lapses, the lock service marks the server as failed and its locks can be reallocated.
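The compatibility rules above can be sketched as a small in-memory lock table. This is a minimal illustration, not Frangipani's actual lock service (which is itself distributed): the class names, the `try_acquire` and `renew_lease` methods, and the 30-second lease constant are all assumptions made for the example.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Set

LEASE_SECONDS = 30.0  # illustrative lease length, not a tuned value


@dataclass
class LockState:
    readers: Set[str] = field(default_factory=set)  # servers holding a read lock
    writer: Optional[str] = None                    # server holding the write lock


class LockService:
    """Toy single-process lock table (hypothetical API)."""

    def __init__(self, now: Callable[[], float] = time.monotonic):
        self._now = now
        self._locks: Dict[str, LockState] = {}
        self._lease_expiry: Dict[str, float] = {}

    def renew_lease(self, server: str) -> None:
        self._lease_expiry[server] = self._now() + LEASE_SECONDS

    def lease_valid(self, server: str) -> bool:
        expiry = self._lease_expiry.get(server)
        return expiry is not None and self._now() < expiry

    def try_acquire(self, server: str, obj: str, mode: str) -> bool:
        """Grant the lock if compatible; False means holders must release first."""
        if mode not in ("read", "write"):
            raise ValueError(f"unknown lock mode: {mode}")
        if not self.lease_valid(server):
            return False
        state = self._locks.setdefault(obj, LockState())
        if state.writer == server:
            return True   # a held write lock already covers reads and writes
        if state.writer is not None:
            return False  # another server holds the write lock
        if mode == "read":
            state.readers.add(server)
            return True
        if state.readers - {server}:
            return False  # other readers must release before a write is granted
        state.readers.discard(server)
        state.writer = server
        return True

    def release(self, server: str, obj: str) -> None:
        state = self._locks.get(obj)
        if state is None:
            return
        state.readers.discard(server)
        if state.writer == server:
            state.writer = None
```

In this sketch a `False` return stands in for the point at which the real lock service would ask the current holders to give the lock back. The clock is injectable so that lease expiry can be exercised without waiting.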

Dealing with conflicts #

A read lock holder must invalidate its cache entries #

When a conflicting lock request comes in for a file or directory, the lock service asks the current lock holder to release its lock. A server holding a read lock is asked to release it when another server requests a write lock. Before complying, the server must invalidate the object’s entry in its cache. This forces the server to fetch fresh data from disk on any subsequent read of that file or directory.

A write lock holder must flush its cache entries #

A server holding a write lock may be asked to release its lock or downgrade it to a read lock. Before complying, it must flush its dirty data to disk. If it is only downgrading the lock, it can keep the cached data, since no other server can update the object while read locks are held. The upshot is that a server’s cached copy of a disk block can differ from the on-disk version only while the server holds the write lock for that block.
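The release and downgrade rules can be sketched with a toy server whose cache sits in front of a shared dictionary standing in for Petal. Everything here is hypothetical scaffolding for illustration (the `Server` class, `grant`, `on_release`, `on_downgrade`); lock granting is done by hand rather than by a lock service.

```python
from typing import Dict, Set


class Server:
    """Toy Frangipani server: a write-back cache over a shared 'Petal' dict."""

    def __init__(self, petal: Dict[str, str]):
        self.petal = petal                # shared disk: object name -> contents
        self.cache: Dict[str, str] = {}
        self.dirty: Set[str] = set()      # cached objects newer than the disk copy
        self.locks: Dict[str, str] = {}   # object name -> "read" or "write"

    def grant(self, obj: str, mode: str) -> None:
        self.locks[obj] = mode

    def read(self, obj: str) -> str:
        if obj not in self.locks:
            raise PermissionError(f"no lock held on {obj}")
        if obj not in self.cache:
            self.cache[obj] = self.petal[obj]  # cache miss: load from disk
        return self.cache[obj]

    def write(self, obj: str, data: str) -> None:
        if self.locks.get(obj) != "write":
            raise PermissionError(f"write lock required on {obj}")
        self.cache[obj] = data            # write-back: the disk is not touched yet
        self.dirty.add(obj)

    def _flush(self, obj: str) -> None:
        if obj in self.dirty:
            self.petal[obj] = self.cache[obj]
            self.dirty.discard(obj)

    def on_downgrade(self, obj: str) -> None:
        self._flush(obj)                  # dirty data reaches disk first
        self.locks[obj] = "read"          # the cached copy stays valid

    def on_release(self, obj: str) -> None:
        self._flush(obj)                  # no-op for a read lock (nothing dirty)
        self.cache.pop(obj, None)         # invalidate so the next read refetches
        self.locks.pop(obj, None)
```

With two such servers sharing one dictionary, a write on the first stays in its cache until `on_downgrade` or `on_release` runs, after which a reader on the second server sees the new contents.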

The locking protocol ensures cache coherence #

Summarizing this section with a quote from the paper:

Frangipani’s locking protocol ensures that updates requested to the same data by different servers are serialized. A write lock that covers dirty data can change owners only after the dirty data has been written to Petal, either by the original lock holder or by a recovery demon running on its behalf.

This protocol ensures that reads in Frangipani always see the latest writes, guaranteeing cache coherence.

Logging #

Frangipani keeps track of metadata updates in a write-ahead log to simplify failure recovery and improve the performance of the system. The paper defines metadata as any on-disk data structure other than the contents of an ordinary file. This could refer to information about a directory, or pointers to the location of the files it contains.

Each Frangipani server has its own private log in Petal. Before making a metadata update, the server creates a log record describing the changes and appends the record to its in-memory log. The log records are then written to Petal before the actual metadata is modified in its permanent location.

Frangipani keeps a version number on each metadata block and assigns a new one every time the block is updated. A metadata update can span multiple blocks, and for each block that a log record updates, the record contains a description of the changes and the new version number.
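The ordering described above (log record in memory, then log on Petal, then metadata in place) can be sketched as follows. The record layout and the `MetadataWriter` class are assumptions for illustration; in particular, this sketch stores the full new block contents in each record rather than a description of the change.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class BlockUpdate:
    block_id: int
    new_version: int
    new_contents: bytes  # assumption: whole-block contents instead of a diff


@dataclass
class LogRecord:
    updates: List[BlockUpdate]  # one metadata update may span several blocks


class MetadataWriter:
    """Toy write-ahead logger over dict/list stand-ins for Petal."""

    def __init__(self, petal_blocks: Dict[int, Tuple[int, bytes]],
                 petal_log: List[LogRecord]):
        self.petal_blocks = petal_blocks  # block_id -> (version, contents)
        self.petal_log = petal_log        # this server's private log on Petal
        self.cache: Dict[int, Tuple[int, bytes]] = {}
        self.mem_log: List[LogRecord] = []

    def _load(self, block_id: int) -> Tuple[int, bytes]:
        if block_id not in self.cache:
            self.cache[block_id] = self.petal_blocks.get(block_id, (0, b""))
        return self.cache[block_id]

    def update(self, changes: Dict[int, bytes]) -> LogRecord:
        updates = []
        for block_id, contents in changes.items():
            version, _ = self._load(block_id)
            updates.append(BlockUpdate(block_id, version + 1, contents))
            self.cache[block_id] = (version + 1, contents)
        record = LogRecord(updates)
        self.mem_log.append(record)       # step 1: in-memory log only
        return record

    def flush_log(self) -> None:
        self.petal_log.extend(self.mem_log)  # step 2: log reaches Petal
        self.mem_log.clear()

    def write_back(self) -> None:
        self.flush_log()                  # write-ahead rule: log before data
        for block_id, entry in self.cache.items():
            self.petal_blocks[block_id] = entry  # step 3: metadata in place
```

Calling `write_back` always flushes the log first, so a block never reaches its permanent location before the record that describes it.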

Frangipani does not log user data #

Note that Frangipani logs only metadata, not user data. This means that if a user writes data to a file in a workstation’s cache and the workstation crashes immediately afterwards, the recently written data may be lost. Ordinary Unix file systems have the same property. Applications that need stronger recovery guarantees can call fsync to flush a file’s cached data to disk right after writing it.

For example, if a user adds a new file with contents to a directory, Frangipani will log that a new file has been added to the directory, but the file contents will not be on disk until the cached data is flushed. Note that if another workstation had been granted a read lock for the file before the crash happened, Frangipani’s locking protocol guarantees that the user’s changes on the original server must have been written to disk beforehand.
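For applications that need the stronger guarantee mentioned above, the usual pattern on a Unix-like system is to call fsync once the write is done. The sketch below uses Python's standard `os.fsync` on an ordinary local file; the helper name `durable_write` is made up for this example, and nothing in it is specific to Frangipani.

```python
import os


def durable_write(path: str, data: bytes) -> None:
    """Write data and ask the OS to push it to stable storage before returning."""
    with open(path, "wb") as f:
        f.write(data)
        f.flush()              # move Python's userspace buffer into the OS cache
        os.fsync(f.fileno())   # ask the OS to write its cached copy to disk
```

Without the `os.fsync` call, the data may sit in a cache after `write` returns, which is the window in which a crash can lose it.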

Recovery #

There is a recovery daemon which helps to manage the recovery of failed servers. A failure can be detected in two ways:

- When the lock service asks for a lock back and does not get a reply.

- When a client of a Frangipani server does not receive a response.

When a Frangipani server crashes while holding locks, the locks that it owns cannot be released without performing the necessary recovery actions. Specifically, the crashed server’s logs must be processed and any pending updates must be written to Petal.

The lock service performs recovery by asking another Frangipani server to process the crashed server’s logs and apply pending updates. The recovery server is itself granted a lock to ensure exclusive access to the crashed server’s log.

Frangipani uses the version number attached to each metadata block to ensure that recovery never replays a log record that describes an update which has already been completed.
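The version check makes replay idempotent, which a short sketch can show. The log layout here (each record a list of `(block_id, new_version, contents)` tuples) and the function name `replay_log` are assumptions for illustration only.

```python
from typing import Dict, List, Tuple

BlockUpdate = Tuple[int, int, bytes]   # (block_id, new_version, contents)
LogRecord = List[BlockUpdate]


def replay_log(log: List[LogRecord],
               blocks: Dict[int, Tuple[int, bytes]]) -> int:
    """Apply only updates newer than what is on disk; return how many applied."""
    applied = 0
    for record in log:
        for block_id, new_version, contents in record:
            on_disk_version, _ = blocks.get(block_id, (0, b""))
            if new_version > on_disk_version:
                blocks[block_id] = (new_version, contents)
                applied += 1
            # otherwise the update already reached disk before the crash: skip
    return applied
```

Running the replay a second time over the same log applies nothing, because every block on disk already carries a version at least as new as the one in the log.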

Frangipani vs GFS #

GFS, another distributed file system, was covered in an earlier post for this course. A major architectural difference is that GFS clients do not cache file data, since GFS targets sequential reads and writes of large files that are too big to fit in a cache. As a result, GFS needs no cache coherence protocol and its clients are relatively simple, unlike Frangipani workstations.

Another difference is that Frangipani presents itself as an actual file system, whereas applications have to be explicitly written to use GFS via library calls. In other words, Frangipani runs at the kernel level while GFS runs at the application level.

Conclusion #

Although some organizations today still store user and project files on distributed file systems, their importance has waned with the rise of laptops (which must be self-contained) and commercial cloud services. Also, the rise of web sites, big data, and cloud computing has shifted the focus of storage system development from file servers to database-like servers which provide a key/value interface.

However, Frangipani still presents some interesting ideas around cache coherence, distributed crash recovery, distributed transactions, and how these all interact with each other. Note that there are some limitations in its design, such as how locks are held on entire files/directories and the possibility of redundant logging since Petal also maintains its own log.

Further Reading #

- Frangipani: A Scalable Distributed File System - Original paper by Chandramohan A. Thekkath, Timothy Mann, and Edward K. Lee published in 1997.

- Lecture 11: Frangipani - MIT 6.824 Lecture Notes.

- Cache coherence by Gary Shute.